#include "Target/AArch64/AArch64TargetTransformInfo.h"

Detailed Description

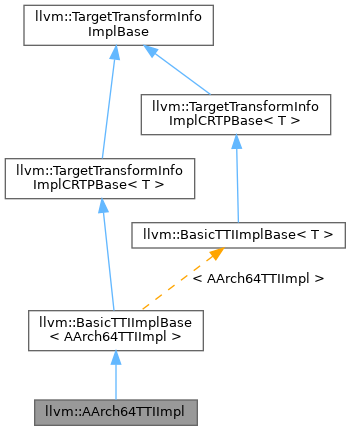

Definition at line 42 of file AArch64TargetTransformInfo.h.

Constructor & Destructor Documentation

◆ AArch64TTIImpl()

[inline, explicit]

Definition at line 73 of file AArch64TargetTransformInfo.h.

Member Function Documentation

◆ areInlineCompatible()

Definition at line 234 of file AArch64TargetTransformInfo.cpp.

References llvm::TargetLoweringBase::getTargetMachine(), hasPossibleIncompatibleOps(), llvm::SMEAttrs::hasStreamingBody(), llvm::SMEAttrs::isNewZA(), llvm::SMEAttrs::set(), llvm::SMEAttrs::SM_Compatible, llvm::SMEAttrs::SM_Enabled, and TM.

◆ areTypesABICompatible()

`bool AArch64TTIImpl::areTypesABICompatible(const Function *Caller, const Function *Callee, const ArrayRef<Type *> &Types) const`

Definition at line 266 of file AArch64TargetTransformInfo.cpp.

References llvm::any_of(), llvm::TargetTransformInfoImplBase::areTypesABICompatible(), and llvm::AArch64Subtarget::useSVEForFixedLengthVectors().

◆ enableInterleavedAccessVectorization()

[inline]

Definition at line 107 of file AArch64TargetTransformInfo.h.

◆ enableMaskedInterleavedAccessVectorization()

[inline]

Definition at line 109 of file AArch64TargetTransformInfo.h.

◆ enableMemCmpExpansion()

`AArch64TTIImpl::TTI::MemCmpExpansionOptions AArch64TTIImpl::enableMemCmpExpansion(bool OptSize, bool IsZeroCmp) const`

Definition at line 3133 of file AArch64TargetTransformInfo.cpp.

References llvm::TargetLoweringBase::getMaxExpandSizeMemcmp(), and Options.

◆ enableOrderedReductions()

[inline]

Definition at line 344 of file AArch64TargetTransformInfo.h.

◆ enableScalableVectorization()

[inline]

Definition at line 378 of file AArch64TargetTransformInfo.h.

◆ enableSelectOptimize()

[inline]

Definition at line 414 of file AArch64TargetTransformInfo.h.

◆ getAddressComputationCost()

`InstructionCost AArch64TTIImpl::getAddressComputationCost(Type *Ty, ScalarEvolution *SE, const SCEV *Ptr)`

Definition at line 3023 of file AArch64TargetTransformInfo.cpp.

References llvm::TargetTransformInfoImplBase::isConstantStridedAccessLessThan(), llvm::Type::isVectorTy(), NeonNonConstStrideOverhead, and Ptr.

◆ getArithmeticInstrCost()

`InstructionCost AArch64TTIImpl::getArithmeticInstrCost(unsigned Opcode, Type *Ty, TTI::TargetCostKind CostKind, TTI::OperandValueInfo Op1Info = {TTI::OK_AnyValue, TTI::OP_None}, TTI::OperandValueInfo Op2Info = {TTI::OK_AnyValue, TTI::OP_None}, ArrayRef<const Value *> Args = ArrayRef<const Value *>(), const Instruction *CxtI = nullptr)`

Definition at line 2850 of file AArch64TargetTransformInfo.cpp.

References llvm::ISD::ADD, llvm::ISD::AND, CostKind, llvm::CostTableLookup(), llvm::BasicTTIImplBase< AArch64TTIImpl >::DL, llvm::ISD::FADD, llvm::ISD::FDIV, llvm::ISD::FMUL, llvm::ISD::FNEG, llvm::ISD::FREM, llvm::ISD::FSUB, getArithmeticInstrCost(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getArithmeticInstrCost(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getCallInstrCost(), llvm::TargetTransformInfo::OperandValueInfo::getNoProps(), llvm::Type::getScalarType(), llvm::EVT::getSimpleVT(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getTypeLegalizationCost(), llvm::TargetLoweringBase::getValueType(), llvm::TargetLoweringBase::InstructionOpcodeToISD(), llvm::Type::isBFloatTy(), llvm::TargetTransformInfo::OperandValueInfo::isConstant(), llvm::Type::isFP128Ty(), llvm::Type::isHalfTy(), llvm::TargetLoweringBase::isOperationLegalOrCustom(), llvm::TargetTransformInfo::OperandValueInfo::isPowerOf2(), llvm::TargetTransformInfo::OperandValueInfo::isUniform(), llvm::Type::isVectorTy(), llvm::ISD::MUL, llvm::ISD::MULHU, llvm::ISD::OR, llvm::ISD::SDIV, llvm::ISD::SHL, llvm::ISD::SRA, llvm::ISD::SRL, llvm::TargetTransformInfo::TCK_RecipThroughput, llvm::ISD::UDIV, and llvm::ISD::XOR.

Referenced by getArithmeticInstrCost(), getArithmeticReductionCost(), getArithmeticReductionCostSVE(), and getIntrinsicInstrCost().

◆ getArithmeticReductionCost()

`InstructionCost AArch64TTIImpl::getArithmeticReductionCost(unsigned Opcode, VectorType *Ty, std::optional<FastMathFlags> FMF, TTI::TargetCostKind CostKind)`

Definition at line 3661 of file AArch64TargetTransformInfo.cpp.

References llvm::ISD::ADD, llvm::ISD::AND, assert(), CostKind, llvm::CostTableLookup(), llvm::FixedVectorType::get(), getArithmeticInstrCost(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getArithmeticReductionCost(), getArithmeticReductionCostSVE(), llvm::VectorType::getElementType(), llvm::InstructionCost::getInvalid(), getMaxNumElements(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getTypeLegalizationCost(), llvm::MVT::getVectorNumElements(), llvm::TargetLoweringBase::InstructionOpcodeToISD(), llvm::isPowerOf2_32(), llvm::ISD::OR, llvm::TargetTransformInfo::requiresOrderedReduction(), and llvm::ISD::XOR.

◆ getArithmeticReductionCostSVE()

`InstructionCost AArch64TTIImpl::getArithmeticReductionCostSVE(unsigned Opcode, VectorType *ValTy, TTI::TargetCostKind CostKind)`

Definition at line 3635 of file AArch64TargetTransformInfo.cpp.

References llvm::ISD::ADD, llvm::ISD::AND, assert(), CostKind, llvm::ISD::FADD, getArithmeticInstrCost(), llvm::Type::getContext(), llvm::InstructionCost::getInvalid(), llvm::EVT::getTypeForEVT(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getTypeLegalizationCost(), llvm::TargetLoweringBase::InstructionOpcodeToISD(), llvm::ISD::OR, and llvm::ISD::XOR.

Referenced by getArithmeticReductionCost().

◆ getCastInstrCost()

`InstructionCost AArch64TTIImpl::getCastInstrCost(unsigned Opcode, Type *Dst, Type *Src, TTI::CastContextHint CCH, TTI::TargetCostKind CostKind, const Instruction *I = nullptr)`

Definition at line 2298 of file AArch64TargetTransformInfo.cpp.

References assert(), llvm::ISD::BITCAST, llvm::EVT::bitsGT(), llvm::ConvertCostTableLookup(), CostKind, llvm::BasicTTIImplBase< AArch64TTIImpl >::DL, llvm::ISD::FP_EXTEND, llvm::ISD::FP_ROUND, llvm::ISD::FP_TO_SINT, llvm::ISD::FP_TO_UINT, llvm::ScalableVectorType::get(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getCastInstrCost(), getCastInstrCost(), llvm::EVT::getSimpleVT(), llvm::TargetLoweringBase::getTypeAction(), llvm::EVT::getTypeForEVT(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getTypeLegalizationCost(), llvm::TargetLoweringBase::getValueType(), llvm::EVT::getVectorNumElements(), llvm::AArch64Subtarget::hasSVEorSME(), I, llvm::TargetLoweringBase::InstructionOpcodeToISD(), isExtPartOfAvgExpr(), llvm::EVT::isFixedLengthVector(), llvm::EVT::isSimple(), llvm::TargetLoweringBase::isTypeLegal(), llvm::TargetTransformInfo::Masked, llvm::TargetTransformInfo::None, llvm::TargetTransformInfo::Normal, Operands, llvm::ISD::SIGN_EXTEND, llvm::ISD::SINT_TO_FP, llvm::AArch64::SVEBitsPerBlock, llvm::TargetTransformInfo::TCK_RecipThroughput, llvm::ISD::TRUNCATE, llvm::TargetLoweringBase::TypePromoteInteger, llvm::TargetLoweringBase::TypeSplitVector, llvm::ISD::UINT_TO_FP, llvm::AArch64Subtarget::useSVEForFixedLengthVectors(), and llvm::ISD::ZERO_EXTEND.

Referenced by getCastInstrCost(), getExtractWithExtendCost(), and getSpliceCost().

◆ getCFInstrCost()

`InstructionCost AArch64TTIImpl::getCFInstrCost(unsigned Opcode, TTI::TargetCostKind CostKind, const Instruction *I = nullptr)`

Definition at line 2759 of file AArch64TargetTransformInfo.cpp.

References assert(), CostKind, and llvm::TargetTransformInfo::TCK_RecipThroughput.

◆ getCmpSelInstrCost()

`InstructionCost AArch64TTIImpl::getCmpSelInstrCost(unsigned Opcode, Type *ValTy, Type *CondTy, CmpInst::Predicate VecPred, TTI::TargetCostKind CostKind, const Instruction *I = nullptr)`

Definition at line 3042 of file AArch64TargetTransformInfo.cpp.

References llvm::any_of(), llvm::CmpInst::BAD_ICMP_PREDICATE, llvm::ConvertCostTableLookup(), CostKind, llvm::BasicTTIImplBase< AArch64TTIImpl >::DL, llvm::CmpInst::FCMP_OEQ, llvm::CmpInst::FCMP_OGE, llvm::CmpInst::FCMP_OGT, llvm::CmpInst::FCMP_OLE, llvm::CmpInst::FCMP_OLT, llvm::CmpInst::FCMP_UNE, llvm::BasicTTIImplBase< AArch64TTIImpl >::getCmpSelInstrCost(), llvm::EVT::getSimpleVT(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getTypeLegalizationCost(), llvm::TargetLoweringBase::getValueType(), I, llvm::TargetLoweringBase::InstructionOpcodeToISD(), llvm::ICmpInst::isEquality(), llvm::Type::isIntegerTy(), llvm::CmpInst::isIntPredicate(), llvm::EVT::isSimple(), llvm::TargetLoweringBase::isTypeLegal(), llvm::PatternMatch::m_And(), llvm::PatternMatch::m_Cmp(), llvm::PatternMatch::m_Select(), llvm::PatternMatch::m_Value(), llvm::PatternMatch::m_Zero(), llvm::PatternMatch::match(), llvm::ISD::SELECT, llvm::ISD::SETCC, and llvm::TargetTransformInfo::TCK_RecipThroughput.

Referenced by getSpliceCost().

◆ getCostOfKeepingLiveOverCall()

`InstructionCost AArch64TTIImpl::getCostOfKeepingLiveOverCall(ArrayRef<Type *> Tys)`

Definition at line 3347 of file AArch64TargetTransformInfo.cpp.

References CostKind, getMemoryOpCost(), I, and llvm::TargetTransformInfo::TCK_RecipThroughput.

◆ getExtractWithExtendCost()

`InstructionCost AArch64TTIImpl::getExtractWithExtendCost(unsigned Opcode, Type *Dst, VectorType *VecTy, unsigned Index)`

Definition at line 2698 of file AArch64TargetTransformInfo.cpp.

References assert(), CostKind, llvm::BasicTTIImplBase< AArch64TTIImpl >::DL, getCastInstrCost(), llvm::VectorType::getElementType(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getTypeLegalizationCost(), llvm::TargetLoweringBase::getValueType(), getVectorInstrCost(), llvm::TargetLoweringBase::isTypeLegal(), llvm_unreachable, llvm::TargetTransformInfo::None, and llvm::TargetTransformInfo::TCK_RecipThroughput.

◆ getGatherScatterOpCost()

`InstructionCost AArch64TTIImpl::getGatherScatterOpCost(unsigned Opcode, Type *DataTy, const Value *Ptr, bool VariableMask, Align Alignment, TTI::TargetCostKind CostKind, const Instruction *I = nullptr)`

Definition at line 3180 of file AArch64TargetTransformInfo.cpp.

References CostKind, llvm::BasicTTIImplBase< AArch64TTIImpl >::getGatherScatterOpCost(), llvm::InstructionCost::getInvalid(), getMaxNumElements(), getMemoryOpCost(), llvm::ElementCount::getScalable(), getSVEGatherScatterOverhead(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getTypeLegalizationCost(), I, isElementTypeLegalForScalableVector(), isLegalMaskedGatherScatter(), Ptr, and useNeonVector().

◆ getGISelRematGlobalCost()

[inline]

Definition at line 357 of file AArch64TargetTransformInfo.h.

◆ getInlineCallPenalty()

`unsigned AArch64TTIImpl::getInlineCallPenalty(const Function *F, const CallBase &Call, unsigned DefaultCallPenalty) const`

Definition at line 291 of file AArch64TargetTransformInfo.cpp.

References CallPenaltyChangeSM, F, InlineCallPenaltyChangeSM, and llvm::SMEAttrs::requiresSMChange().

◆ getInterleavedMemoryOpCost()

`InstructionCost AArch64TTIImpl::getInterleavedMemoryOpCost(unsigned Opcode, Type *VecTy, unsigned Factor, ArrayRef<unsigned> Indices, Align Alignment, unsigned AddressSpace, TTI::TargetCostKind CostKind, bool UseMaskForCond = false, bool UseMaskForGaps = false)`

Definition at line 3311 of file AArch64TargetTransformInfo.cpp.

References assert(), CostKind, llvm::BasicTTIImplBase< AArch64TTIImpl >::DL, llvm::VectorType::get(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getInterleavedMemoryOpCost(), llvm::InstructionCost::getInvalid(), llvm::AArch64TargetLowering::getNumInterleavedAccesses(), llvm::AArch64TargetLowering::isLegalInterleavedAccessType(), and llvm::Type::isScalableTy().

◆ getIntImmCost() [1/2]

`InstructionCost AArch64TTIImpl::getIntImmCost(const APInt &Imm, Type *Ty, TTI::TargetCostKind CostKind)`

Calculate the cost of materializing the given constant.

Definition at line 349 of file AArch64TargetTransformInfo.cpp.

References llvm::APInt::ashr(), assert(), getIntImmCost(), llvm::Type::getPrimitiveSizeInBits(), llvm::APInt::getSExtValue(), llvm::Type::isIntegerTy(), and llvm::APInt::sextOrTrunc().

◆ getIntImmCost() [2/2]

`InstructionCost AArch64TTIImpl::getIntImmCost(int64_t Val)`

Calculate the cost of materializing a 64-bit value.

This helper may be asked to cost only one 64-bit fraction of a larger immediate, so returning a cost of zero is valid.

Definition at line 334 of file AArch64TargetTransformInfo.cpp.

References llvm::AArch64_IMM::expandMOVImm(), Insn, and llvm::AArch64_AM::isLogicalImmediate().

Referenced by getIntImmCost(), getIntImmCostInst(), and getIntImmCostIntrin().
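The relationship between the two getIntImmCost() overloads can be sketched as follows. This is a minimal, self-contained illustration, not the LLVM implementation: `chunkCost` and `wideImmCost` are hypothetical names, and the per-chunk rule (count the non-zero 16-bit halfwords, as in a MOVZ/MOVK sequence) is an assumption standing in for the referenced `isLogicalImmediate()` and `expandMOVImm()` helpers. The floor of one instruction for the whole constant is likewise an assumption.

```cpp
#include <algorithm>
#include <cstdint>
#include <vector>

// Hypothetical stand-in for the 64-bit helper. It models a MOVZ/MOVK
// sequence by counting the non-zero 16-bit halfwords of the chunk.
// An all-zero chunk costs nothing, which is the "cost of zero" case.
unsigned chunkCost(uint64_t Chunk) {
  unsigned Cost = 0;
  for (unsigned Shift = 0; Shift < 64; Shift += 16)
    if ((Chunk >> Shift) & 0xFFFFu)
      ++Cost;
  return Cost;
}

// Hypothetical stand-in for the wide-immediate overload. The immediate is
// passed as 64-bit chunks, least significant chunk first.
unsigned wideImmCost(const std::vector<uint64_t> &Chunks) {
  unsigned Cost = 0;
  for (uint64_t Chunk : Chunks)
    Cost += chunkCost(Chunk);
  // Assumption: any constant needs at least one instruction overall,
  // even if every individual chunk reported zero.
  return std::max(1u, Cost);
}
```

A zero chunk contributes nothing to the sum, which is why the per-chunk helper may legitimately return zero while the caller still reports a non-zero total.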

◆ getIntImmCostInst()

`InstructionCost AArch64TTIImpl::getIntImmCostInst(unsigned Opcode, unsigned Idx, const APInt &Imm, Type *Ty, TTI::TargetCostKind CostKind, Instruction *Inst = nullptr)`

Definition at line 374 of file AArch64TargetTransformInfo.cpp.

References assert(), CostKind, getIntImmCost(), llvm::Type::getPrimitiveSizeInBits(), Idx, llvm::Type::isIntegerTy(), llvm::TargetTransformInfo::TCC_Basic, and llvm::TargetTransformInfo::TCC_Free.

◆ getIntImmCostIntrin()

`InstructionCost AArch64TTIImpl::getIntImmCostIntrin(Intrinsic::ID IID, unsigned Idx, const APInt &Imm, Type *Ty, TTI::TargetCostKind CostKind)`

Definition at line 443 of file AArch64TargetTransformInfo.cpp.

References assert(), CostKind, getIntImmCost(), llvm::Type::getPrimitiveSizeInBits(), Idx, llvm::Type::isIntegerTy(), llvm::TargetTransformInfo::TCC_Basic, and llvm::TargetTransformInfo::TCC_Free.

◆ getIntrinsicInstrCost()

`InstructionCost AArch64TTIImpl::getIntrinsicInstrCost(const IntrinsicCostAttributes &ICA, TTI::TargetCostKind CostKind)`

Definition at line 509 of file AArch64TargetTransformInfo.cpp.

References llvm::any_of(), llvm::CallingConv::C, CostKind, llvm::CostTableLookup(), llvm::ISD::CTPOP, llvm::BasicTTIImplBase< AArch64TTIImpl >::DL, llvm::SmallVectorBase< Size_T >::empty(), llvm::VectorType::get(), llvm::IntrinsicCostAttributes::getArgs(), llvm::IntrinsicCostAttributes::getArgTypes(), getArithmeticInstrCost(), llvm::IntrinsicCostAttributes::getID(), llvm::Type::getIntNTy(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getIntrinsicInstrCost(), getIntrinsicInstrCost(), llvm::TargetTransformInfo::getOperandInfo(), llvm::IntrinsicCostAttributes::getReturnType(), llvm::EVT::getScalarSizeInBits(), llvm::MVT::getScalarSizeInBits(), llvm::Type::getScalarType(), llvm::EVT::getSimpleVT(), llvm::TargetLoweringBase::getTypeConversion(), llvm::EVT::getTypeForEVT(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getTypeLegalizationCost(), llvm::TargetLoweringBase::getValueType(), Idx, llvm::TargetTransformInfo::OperandValueInfo::isConstant(), llvm::Type::isIntegerTy(), llvm::EVT::isSimple(), llvm::TargetTransformInfo::OperandValueInfo::isUniform(), isUnpackedVectorVT(), llvm::MVT::isVector(), llvm::ConstantInt::isZero(), RetTy, llvm::SmallVectorBase< Size_T >::size(), llvm::TargetTransformInfo::TCC_Free, and llvm::TargetLoweringBase::TypeLegal.

Referenced by getIntrinsicInstrCost(), and getMinMaxReductionCost().

◆ getMaskedMemoryOpCost()

`InstructionCost AArch64TTIImpl::getMaskedMemoryOpCost(unsigned Opcode, Type *Src, Align Alignment, unsigned AddressSpace, TTI::TargetCostKind CostKind)`

Definition at line 3156 of file AArch64TargetTransformInfo.cpp.

References CostKind, llvm::InstructionCost::getInvalid(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getMaskedMemoryOpCost(), llvm::ElementCount::getScalable(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getTypeLegalizationCost(), and useNeonVector().

◆ getMaxInterleaveFactor()

`unsigned AArch64TTIImpl::getMaxInterleaveFactor(ElementCount VF)`

Definition at line 3361 of file AArch64TargetTransformInfo.cpp.

References llvm::AArch64Subtarget::getMaxInterleaveFactor().

◆ getMaxNumElements()

[inline]

Try to return an estimated cost factor that can be used as a multiplier when scalarizing an operation for a vector with ElementCount VF.

For scalable vectors this currently takes the most pessimistic view, based on the maximum possible value of vscale.

Definition at line 151 of file AArch64TargetTransformInfo.h.

References llvm::details::FixedOrScalableQuantity< LeafTy, ValueTy >::getFixedValue(), llvm::details::FixedOrScalableQuantity< LeafTy, ValueTy >::getKnownMinValue(), and llvm::details::FixedOrScalableQuantity< LeafTy, ValueTy >::isScalable().

Referenced by getArithmeticReductionCost(), and getGatherScatterOpCost().
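The fixed versus scalable distinction described for getMaxNumElements() can be sketched as below. This is an illustrative model only: `ElemCount` is a hypothetical simplification of `ElementCount`, `maxNumElements` is a hypothetical name, and the vscale bound is taken as a caller-supplied parameter because this page does not show where the real implementation obtains it.

```cpp
#include <cstdint>

// Hypothetical, simplified model of an element count: a known minimum
// number of elements plus a flag saying whether it is scaled by vscale.
struct ElemCount {
  unsigned KnownMin;
  bool Scalable;
};

// Sketch of the estimate. VScaleBound is an assumed upper bound on vscale
// supplied by the caller; it is ignored for fixed-width vectors.
unsigned maxNumElements(ElemCount VF, unsigned VScaleBound) {
  if (!VF.Scalable)
    return VF.KnownMin;              // fixed-width: the exact element count
  return VF.KnownMin * VScaleBound;  // scalable: pessimistic upper estimate
}
```

For example, with an assumed bound of 16 (a 2048-bit vector built from 128-bit blocks), a scalable count with a known minimum of 4 would be estimated at 64 elements, while a fixed count of 4 stays at 4.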

◆ getMemoryOpCost()

`InstructionCost AArch64TTIImpl::getMemoryOpCost(unsigned Opcode, Type *Src, MaybeAlign Alignment, unsigned AddressSpace, TTI::TargetCostKind CostKind, TTI::OperandValueInfo OpInfo = {TTI::OK_AnyValue, TTI::OP_None}, const Instruction *I = nullptr)`

Definition at line 3218 of file AArch64TargetTransformInfo.cpp.

References llvm::CallingConv::C, CostKind, llvm::BasicTTIImplBase< AArch64TTIImpl >::DL, llvm::SmallVectorBase< Size_T >::empty(), llvm::Type::getContext(), llvm::InstructionCost::getInvalid(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getMemoryOpCost(), llvm::ElementCount::getScalable(), llvm::EVT::getScalarSizeInBits(), llvm::Type::getScalarSizeInBits(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getTypeLegalizationCost(), llvm::TargetLoweringBase::getValueType(), llvm::EVT::getVectorElementType(), llvm::EVT::getVectorNumElements(), llvm::EVT::getVectorVT(), llvm::isPowerOf2_32(), llvm::Type::isPtrOrPtrVectorTy(), llvm::NextPowerOf2(), llvm::SmallVectorImpl< T >::pop_back_val(), llvm::SmallVectorTemplateBase< T, bool >::push_back(), llvm::TargetTransformInfo::TCK_CodeSize, llvm::TargetTransformInfo::TCK_RecipThroughput, llvm::TargetTransformInfo::TCK_SizeAndLatency, and useNeonVector().

Referenced by getCostOfKeepingLiveOverCall(), and getGatherScatterOpCost().

◆ getMinMaxReductionCost()

`InstructionCost AArch64TTIImpl::getMinMaxReductionCost(Intrinsic::ID IID, VectorType *Ty, FastMathFlags FMF, TTI::TargetCostKind CostKind)`

Definition at line 3617 of file AArch64TargetTransformInfo.cpp.

References CostKind, llvm::Type::getContext(), getIntrinsicInstrCost(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getMinMaxReductionCost(), llvm::EVT::getTypeForEVT(), and llvm::BasicTTIImplBase< AArch64TTIImpl >::getTypeLegalizationCost().

◆ getMinPageSize()

[inline]

Definition at line 427 of file AArch64TargetTransformInfo.h.

◆ getMinTripCountTailFoldingThreshold()

[inline]

Definition at line 361 of file AArch64TargetTransformInfo.h.

◆ getMinVectorRegisterBitWidth()

[inline]

Definition at line 135 of file AArch64TargetTransformInfo.h.

◆ getNumberOfRegisters()

Definition at line 111 of file AArch64TargetTransformInfo.h.

References llvm::Vector.

◆ getOrCreateResultFromMemIntrinsic()

`Value *AArch64TTIImpl::getOrCreateResultFromMemIntrinsic(IntrinsicInst *Inst, Type *ExpectedType)`

Definition at line 3478 of file AArch64TargetTransformInfo.cpp.

References llvm::CallBase::arg_size(), llvm::IRBuilderBase::CreateInsertValue(), llvm::PoisonValue::get(), llvm::CallBase::getArgOperand(), llvm::IntrinsicInst::getIntrinsicID(), and llvm::Value::getType().

◆ getPeelingPreferences()

`void AArch64TTIImpl::getPeelingPreferences(Loop *L, ScalarEvolution &SE, TTI::PeelingPreferences &PP)`

Definition at line 3473 of file AArch64TargetTransformInfo.cpp.

References llvm::BasicTTIImplBase< AArch64TTIImpl >::getPeelingPreferences().

◆ getPopcntSupport()

`TargetTransformInfo::PopcntSupportKind AArch64TTIImpl::getPopcntSupport(unsigned TyWidth)`

Definition at line 495 of file AArch64TargetTransformInfo.cpp.

References assert(), llvm::isPowerOf2_32(), llvm::TargetTransformInfo::PSK_FastHardware, and llvm::TargetTransformInfo::PSK_Software.

◆ getPreferredTailFoldingStyle()

[inline]

Definition at line 365 of file AArch64TargetTransformInfo.h.

References llvm::DataAndControlFlow, llvm::DataAndControlFlowWithoutRuntimeCheck, and llvm::DataWithoutLaneMask.

◆ getRegisterBitWidth()

`TypeSize AArch64TTIImpl::getRegisterBitWidth(TargetTransformInfo::RegisterKind K) const`

Definition at line 2136 of file AArch64TargetTransformInfo.cpp.

References EnableFixedwidthAutovecInStreamingMode, EnableScalableAutovecInStreamingMode, llvm::TypeSize::getFixed(), llvm::AArch64Subtarget::getMinSVEVectorSizeInBits(), llvm::TypeSize::getScalable(), llvm::AArch64Subtarget::isNeonAvailable(), llvm::AArch64Subtarget::isSVEAvailable(), llvm_unreachable, llvm::TargetTransformInfo::RGK_FixedWidthVector, llvm::TargetTransformInfo::RGK_ScalableVector, and llvm::TargetTransformInfo::RGK_Scalar.

◆ getScalarizationOverhead()

`InstructionCost AArch64TTIImpl::getScalarizationOverhead(VectorType *Ty, const APInt &DemandedElts, bool Insert, bool Extract, TTI::TargetCostKind CostKind)`

Definition at line 2838 of file AArch64TargetTransformInfo.cpp.

References CostKind, llvm::VectorType::getElementType(), llvm::InstructionCost::getInvalid(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getScalarizationOverhead(), llvm::AArch64Subtarget::getVectorInsertExtractBaseCost(), llvm::Type::isFloatingPointTy(), and llvm::APInt::popcount().

◆ getScalingFactorCost()

`InstructionCost AArch64TTIImpl::getScalingFactorCost(Type *Ty, GlobalValue *BaseGV, int64_t BaseOffset, bool HasBaseReg, int64_t Scale, unsigned AddrSpace) const`

Return the cost of the scaling factor used in the addressing mode (AM) described by the BaseGV, BaseOffset, HasBaseReg, and Scale parameters, for a load/store of the specified type on this target.

If the AM is supported, the return value must be >= 0. If the AM is not supported, the return value is negative.

Definition at line 4155 of file AArch64TargetTransformInfo.cpp.

References llvm::TargetLoweringBase::AddrMode::BaseGV, llvm::TargetLoweringBase::AddrMode::BaseOffs, llvm::BasicTTIImplBase< AArch64TTIImpl >::DL, llvm::TargetLoweringBase::AddrMode::HasBaseReg, llvm::BasicTTIImplBase< AArch64TTIImpl >::isLegalAddressingMode(), and llvm::TargetLoweringBase::AddrMode::Scale.
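The sign convention described for getScalingFactorCost() can be sketched as below. This is a toy model under stated assumptions, not the LLVM code: `AddrMode` here is a simplified stand-in whose field names follow the `AddrMode` members listed in the references, `isLegalAddrModeSketch` is a hypothetical legality rule invented for illustration, and the cost of 1 for a non-trivial scale is an assumption.

```cpp
#include <cstdint>

// Simplified stand-in for the addressing-mode record. HasBaseGV replaces
// the BaseGV pointer so the sketch needs no IR types.
struct AddrMode {
  bool HasBaseGV;
  int64_t BaseOffs;
  bool HasBaseReg;
  int64_t Scale;
};

// Hypothetical legality rule, for illustration only: no global base, and
// a scale of 0, 1, 2, 4, or 8.
bool isLegalAddrModeSketch(const AddrMode &AM) {
  if (AM.HasBaseGV)
    return false;
  return AM.Scale == 0 || AM.Scale == 1 || AM.Scale == 2 ||
         AM.Scale == 4 || AM.Scale == 8;
}

// Contract from the description: non-negative when the addressing mode is
// supported, negative when it is not.
int scalingFactorCostSketch(const AddrMode &AM) {
  if (!isLegalAddrModeSketch(AM))
    return -1;
  // Assumption: a scaled register costs 1 unless the scale is 0 or 1.
  return (AM.Scale != 0 && AM.Scale != 1) ? 1 : 0;
}
```

A caller would treat any negative result as "addressing mode unavailable" and compare only non-negative results as costs.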

◆ getShuffleCost()

`InstructionCost AArch64TTIImpl::getShuffleCost(TTI::ShuffleKind Kind, VectorType *Tp, ArrayRef<int> Mask, TTI::TargetCostKind CostKind, int Index, VectorType *SubTp, ArrayRef<const Value *> Args = std::nullopt, const Instruction *CxtI = nullptr)`

Definition at line 3818 of file AArch64TargetTransformInfo.cpp.

References llvm::all_of(), llvm::any_of(), CostKind, llvm::CostTableLookup(), llvm::drop_begin(), llvm::enumerate(), llvm::VectorType::get(), llvm::VectorType::getElementCount(), llvm::VectorType::getElementType(), llvm::ElementCount::getFixed(), llvm::details::FixedOrScalableQuantity< LeafTy, ValueTy >::getKnownMinValue(), llvm::getPerfectShuffleCost(), llvm::Type::getScalarSizeInBits(), llvm::Type::getScalarType(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getShuffleCost(), getShuffleCost(), getSpliceCost(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getTypeLegalizationCost(), llvm::Value::hasOneUse(), llvm::BasicTTIImplBase< AArch64TTIImpl >::improveShuffleKindFromMask(), llvm::ShuffleVectorInst::isInterleaveMask(), isLegalBroadcastLoad(), llvm::isUZPMask(), llvm::isZIPMask(), N, llvm::PoisonMaskElem, llvm::SmallVectorTemplateBase< T, bool >::push_back(), llvm::TargetTransformInfo::SK_Broadcast, llvm::TargetTransformInfo::SK_ExtractSubvector, llvm::TargetTransformInfo::SK_InsertSubvector, llvm::TargetTransformInfo::SK_PermuteSingleSrc, llvm::TargetTransformInfo::SK_PermuteTwoSrc, llvm::TargetTransformInfo::SK_Reverse, llvm::TargetTransformInfo::SK_Select, llvm::TargetTransformInfo::SK_Splice, llvm::TargetTransformInfo::SK_Transpose, llvm::TargetTransformInfo::TCK_CodeSize, and llvm::Value::user_begin().

Referenced by getShuffleCost().

◆ getSpliceCost()

`InstructionCost AArch64TTIImpl::getSpliceCost(VectorType *Tp, int Index)`

Definition at line 3762 of file AArch64TargetTransformInfo.cpp.

References assert(), llvm::CmpInst::BAD_ICMP_PREDICATE, CostKind, llvm::CostTableLookup(), getCastInstrCost(), getCmpSelInstrCost(), llvm::Type::getContext(), llvm::VectorType::getElementCount(), llvm::InstructionCost::getInvalid(), llvm::AArch64TargetLowering::getPromotedVTForPredicate(), llvm::ElementCount::getScalable(), llvm::EVT::getSimpleVT(), llvm::EVT::getTypeForEVT(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getTypeLegalizationCost(), llvm::TargetTransformInfo::None, llvm::TargetTransformInfo::SK_Splice, and llvm::TargetTransformInfo::TCK_RecipThroughput.

Referenced by getShuffleCost().

◆ getStoreMinimumVF()

[inline]

Definition at line 418 of file AArch64TargetTransformInfo.h.

References llvm::BasicTTIImplBase< AArch64TTIImpl >::getStoreMinimumVF(), llvm::Type::isIntegerTy(), and llvm::isPowerOf2_32().

◆ getTgtMemIntrinsic()

`bool AArch64TTIImpl::getTgtMemIntrinsic(IntrinsicInst *Inst, MemIntrinsicInfo &Info)`

Definition at line 3514 of file AArch64TargetTransformInfo.cpp.

References llvm::CallBase::arg_size(), llvm::CallBase::getArgOperand(), llvm::IntrinsicInst::getIntrinsicID(), and Info.

◆ getUnrollingPreferences()

`void AArch64TTIImpl::getUnrollingPreferences(Loop *L, ScalarEvolution &SE, TTI::UnrollingPreferences &UP, OptimizationRemarkEmitter *ORE)`

Definition at line 3417 of file AArch64TargetTransformInfo.cpp.

References llvm::TargetTransformInfo::UnrollingPreferences::DefaultUnrollRuntimeCount, EnableFalkorHWPFUnrollFix, F, llvm::AArch64Subtarget::Falkor, getCalledFunction(), getFalkorUnrollingPreferences(), llvm::AArch64Subtarget::getProcFamily(), llvm::BasicTTIImplBase< AArch64TTIImpl >::getUnrollingPreferences(), I, llvm::TargetTransformInfoImplBase::isLoweredToCall(), llvm::AArch64Subtarget::Others, llvm::TargetTransformInfo::UnrollingPreferences::Partial, llvm::TargetTransformInfo::UnrollingPreferences::PartialOptSizeThreshold, llvm::TargetTransformInfo::UnrollingPreferences::PartialThreshold, llvm::TargetTransformInfo::UnrollingPreferences::Runtime, llvm::TargetTransformInfo::UnrollingPreferences::UnrollAndJam, llvm::TargetTransformInfo::UnrollingPreferences::UnrollAndJamInnerLoopThreshold, llvm::TargetTransformInfo::UnrollingPreferences::UnrollRemainder, and llvm::TargetTransformInfo::UnrollingPreferences::UpperBound.

◆ getVectorInstrCost() [1/2]

`InstructionCost AArch64TTIImpl::getVectorInstrCost(const Instruction &I, Type *Val, TTI::TargetCostKind CostKind, unsigned Index)`

Definition at line 2831 of file AArch64TargetTransformInfo.cpp.

References I.

◆ getVectorInstrCost() [2/2]

`InstructionCost AArch64TTIImpl::getVectorInstrCost(unsigned Opcode, Type *Val, TTI::TargetCostKind CostKind, unsigned Index, Value *Op0, Value *Op1)`

Definition at line 2822 of file AArch64TargetTransformInfo.cpp.

Referenced by getExtractWithExtendCost().

◆ getVScaleForTuning()

[inline]

Definition at line 139 of file AArch64TargetTransformInfo.h.

◆ instCombineIntrinsic()

`std::optional<Instruction *> AArch64TTIImpl::instCombineIntrinsic(InstCombiner &IC, IntrinsicInst &II) const`

Definition at line 1944 of file AArch64TargetTransformInfo.cpp.

References llvm::BasicTTIImplBase< AArch64TTIImpl >::DL, llvm::IntrinsicInst::getIntrinsicID(), instCombineConvertFromSVBool(), instCombineLD1GatherIndex(), instCombineMaxMinNM(), instCombineRDFFR(), instCombineST1ScatterIndex(), instCombineSVEAllOrNoActive(), instCombineSVECmpNE(), instCombineSVECntElts(), instCombineSVECondLast(), instCombineSVEDup(), instCombineSVEDupqLane(), instCombineSVEDupX(), instCombineSVELast(), instCombineSVELD1(), instCombineSVEPTest(), instCombineSVESDIV(), instCombineSVESel(), instCombineSVESrshl(), instCombineSVEST1(), instCombineSVETBL(), instCombineSVEUnpack(), instCombineSVEUzp1(), instCombineSVEVectorAdd(), instCombineSVEVectorFAdd(), instCombineSVEVectorFAddU(), instCombineSVEVectorFSub(), instCombineSVEVectorFSubU(), instCombineSVEVectorFuseMulAddSub(), instCombineSVEVectorMul(), instCombineSVEVectorSub(), and instCombineSVEZip().

◆ isElementTypeLegalForScalableVector()

Definition at line 241 of file AArch64TargetTransformInfo.h.

References llvm::Type::isBFloatTy(), llvm::Type::isDoubleTy(), llvm::Type::isFloatTy(), llvm::Type::isHalfTy(), llvm::Type::isIntegerTy(), and llvm::Type::isPointerTy().

Referenced by getGatherScatterOpCost(), isLegalMaskedGatherScatter(), isLegalMaskedLoadStore(), and isLegalToVectorizeReduction().

◆ isExtPartOfAvgExpr()

`bool AArch64TTIImpl::isExtPartOfAvgExpr(const Instruction *ExtUser, Type *Dst, Type *Src)`

Definition at line 2256 of file AArch64TargetTransformInfo.cpp.

References llvm::Add, llvm::BasicTTIImplBase< AArch64TTIImpl >::DL, llvm::Instruction::getOpcode(), llvm::TargetLoweringBase::getValueType(), llvm::Value::hasOneUse(), llvm::TargetLoweringBase::isTypeLegal(), llvm::PatternMatch::m_c_Add(), llvm::PatternMatch::m_Instruction(), llvm::PatternMatch::m_SpecificInt(), llvm::PatternMatch::m_Value(), llvm::PatternMatch::m_ZExtOrSExt(), and llvm::PatternMatch::match().

Referenced by getCastInstrCost().

◆ isLegalBroadcastLoad()

[inline]

Definition at line 299 of file AArch64TargetTransformInfo.h.

References llvm::details::FixedOrScalableQuantity< LeafTy, ValueTy >::getFixedValue(), llvm::Type::getScalarSizeInBits(), and llvm::details::FixedOrScalableQuantity< LeafTy, ValueTy >::isScalable().

Referenced by getShuffleCost().

◆ isLegalMaskedGather()

Definition at line 291 of file AArch64TargetTransformInfo.h.

References isLegalMaskedGatherScatter().

◆ isLegalMaskedGatherScatter()

Definition at line 278 of file AArch64TargetTransformInfo.h.

References llvm::Type::getScalarType(), and isElementTypeLegalForScalableVector().

Referenced by getGatherScatterOpCost(), isLegalMaskedGather(), and isLegalMaskedScatter().

◆ isLegalMaskedLoad()

Definition at line 270 of file AArch64TargetTransformInfo.h.

References isLegalMaskedLoadStore().

◆ isLegalMaskedLoadStore()

Definition at line 258 of file AArch64TargetTransformInfo.h.

References llvm::Type::getPrimitiveSizeInBits(), llvm::Type::getScalarType(), and isElementTypeLegalForScalableVector().

Referenced by isLegalMaskedLoad(), and isLegalMaskedStore().

◆ isLegalMaskedScatter()

Definition at line 295 of file AArch64TargetTransformInfo.h.

References isLegalMaskedGatherScatter().

◆ isLegalMaskedStore()

Definition at line 274 of file AArch64TargetTransformInfo.h.

References isLegalMaskedLoadStore().

◆ isLegalNTLoad()

Definition at line 337 of file AArch64TargetTransformInfo.h.

References llvm::TargetTransformInfoImplBase::isLegalNTLoad(), and isLegalNTStoreLoad().

◆ isLegalNTStore()

Definition at line 333 of file AArch64TargetTransformInfo.h.

References isLegalNTStoreLoad().

◆ isLegalNTStoreLoad()

Definition at line 316 of file AArch64TargetTransformInfo.h.

References llvm::TargetTransformInfoImplBase::isLegalNTStore(), and llvm::isPowerOf2_64().

Referenced by isLegalNTLoad(), and isLegalNTStore().

◆ isLegalToVectorizeReduction()

bool AArch64TTIImpl::isLegalToVectorizeReduction(const RecurrenceDescriptor &RdxDesc, ElementCount VF) const

Definition at line 3586 of file AArch64TargetTransformInfo.cpp.

References llvm::Add, llvm::And, llvm::FAdd, llvm::FAnyOf, llvm::FMax, llvm::FMin, llvm::FMulAdd, llvm::RecurrenceDescriptor::getRecurrenceKind(), llvm::RecurrenceDescriptor::getRecurrenceType(), llvm::IAnyOf, llvm::Type::isBFloatTy(), isElementTypeLegalForScalableVector(), llvm::details::FixedOrScalableQuantity< LeafTy, ValueTy >::isScalable(), llvm::Or, llvm::SMax, llvm::SMin, llvm::UMax, llvm::UMin, and llvm::Xor.

◆ isVScaleKnownToBeAPowerOfTwo()

|

inline |

Definition at line 143 of file AArch64TargetTransformInfo.h.

◆ preferPredicatedReductionSelect()

|

inline |

Definition at line 383 of file AArch64TargetTransformInfo.h.

◆ preferPredicateOverEpilogue()

| bool AArch64TTIImpl::preferPredicateOverEpilogue | ( | TailFoldingInfo * | TFI | ) |

Definition at line 4114 of file AArch64TargetTransformInfo.cpp.

References llvm::LoopBase< BlockT, LoopT >::blocks(), containsDecreasingPointers(), llvm::Disabled, llvm::LoopVectorizationLegality::getFixedOrderRecurrences(), llvm::LoopVectorizationLegality::getLoop(), llvm::LoopVectorizationLegality::getPredicatedScalarEvolution(), llvm::LoopVectorizationLegality::getReductionVars(), llvm::AArch64Subtarget::getSVETailFoldingDefaultOpts(), llvm::InterleavedAccessInfo::hasGroups(), llvm::TailFoldingInfo::IAI, llvm::TailFoldingInfo::LVL, llvm::Recurrences, llvm::Reductions, llvm::Reverse, llvm::Simple, llvm::MapVector< KeyT, ValueT, MapType, VectorType >::size(), llvm::SmallPtrSetImplBase::size(), SVETailFoldInsnThreshold, and TailFoldingOptionLoc.

◆ prefersVectorizedAddressing()

| bool AArch64TTIImpl::prefersVectorizedAddressing | ( | ) | const |

Definition at line 3151 of file AArch64TargetTransformInfo.cpp.

◆ shouldConsiderAddressTypePromotion()

bool AArch64TTIImpl::shouldConsiderAddressTypePromotion(const Instruction &I, bool &AllowPromotionWithoutCommonHeader)

See if I should be considered for address type promotion.

We check whether I is a sext of the right type that is used in memory accesses. If it is used in a "complex" getelementptr, we allow it to be promoted without finding other sext instructions that sign-extend the same initial value. A getelementptr is considered "complex" if it has more than 2 operands.

Definition at line 3559 of file AArch64TargetTransformInfo.cpp.

References llvm::Type::getInt64Ty(), and I.
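The check described for shouldConsiderAddressTypePromotion() can be sketched on a toy model. The following is a minimal illustration, not the LLVM implementation: `ToyInst`, `Opcode`, and `shouldConsiderPromotion` are hypothetical stand-ins for LLVM IR classes, and "right type" is assumed to mean a 64-bit result, based on the reference to getInt64Ty() above.

```cpp
#include <vector>

// Hypothetical, simplified stand-ins for LLVM IR concepts (not LLVM's API).
enum class Opcode { SExt, GetElementPtr, Other };

struct ToyInst {
  Opcode Op;                           // kind of instruction
  unsigned ResultBits;                 // bit width of the result type
  unsigned NumOperands;                // operand count (for a GEP: base pointer plus indices)
  std::vector<const ToyInst *> Users;  // instructions that use this value
};

// Returns true if I is a candidate for address type promotion.
// Assumption: the "right type" is a 64-bit integer result.
bool shouldConsiderPromotion(const ToyInst &I,
                             bool &AllowPromotionWithoutCommonHeader) {
  AllowPromotionWithoutCommonHeader = false;

  // Only sign extensions to the assumed 64-bit address width qualify.
  if (I.Op != Opcode::SExt || I.ResultBits != 64)
    return false;

  bool Considerable = false;
  for (const ToyInst *U : I.Users) {
    if (U->Op != Opcode::GetElementPtr)
      continue;
    // Used in an address computation, so it is worth considering.
    Considerable = true;
    // A "complex" GEP (more than 2 operands) permits promotion without
    // another sext of the same initial value.
    if (U->NumOperands > 2) {
      AllowPromotionWithoutCommonHeader = true;
      break;
    }
  }
  return Considerable;
}
```

In this sketch, a sext feeding only a two-operand getelementptr is reported as a candidate with the out-parameter left false, while a sext feeding a getelementptr with three or more operands sets it to true.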

◆ shouldExpandReduction()

|

inline |

Definition at line 355 of file AArch64TargetTransformInfo.h.

◆ shouldMaximizeVectorBandwidth()

| bool AArch64TTIImpl::shouldMaximizeVectorBandwidth | ( | TargetTransformInfo::RegisterKind | K | ) | const |

Definition at line 324 of file AArch64TargetTransformInfo.cpp.

References assert(), llvm::AArch64Subtarget::isNeonAvailable(), llvm::TargetTransformInfo::RGK_FixedWidthVector, and llvm::TargetTransformInfo::RGK_Scalar.

◆ shouldTreatInstructionLikeSelect()

| bool AArch64TTIImpl::shouldTreatInstructionLikeSelect | ( | const Instruction * | I | ) |

Definition at line 4177 of file AArch64TargetTransformInfo.cpp.

References EnableOrLikeSelectOpt, I, and llvm::TargetTransformInfoImplBase::shouldTreatInstructionLikeSelect().

◆ simplifyDemandedVectorEltsIntrinsic()

std::optional<Value *> AArch64TTIImpl::simplifyDemandedVectorEltsIntrinsic(InstCombiner &IC, IntrinsicInst &II, APInt DemandedElts, APInt &UndefElts, APInt &UndefElts2, APInt &UndefElts3, std::function<void(Instruction *, unsigned, APInt, APInt &)> SimplifyAndSetOp) const

Definition at line 2109 of file AArch64TargetTransformInfo.cpp.

References llvm::IntrinsicInst::getIntrinsicID().

◆ supportsScalableVectors()

|

inline |

Definition at line 376 of file AArch64TargetTransformInfo.h.

◆ useNeonVector()

Definition at line 3214 of file AArch64TargetTransformInfo.cpp.

References llvm::AArch64Subtarget::useSVEForFixedLengthVectors().

Referenced by getGatherScatterOpCost(), getMaskedMemoryOpCost(), and getMemoryOpCost().

The documentation for this class was generated from the following files:

- lib/Target/AArch64/AArch64TargetTransformInfo.h

- lib/Target/AArch64/AArch64TargetTransformInfo.cpp
